Complex or Irregular Lung Nodule Detection from CT Scans

Sept 2023 → May 2024

Lung cancer kills with particular efficiency partly because it so often goes unnoticed until it has made itself unmissable. This project assembles three deep learning architectures, V-Net, YOLOv5s, and DETR, into a single detection pipeline that finds the nodules a radiologist might miss and declines to invent the ones that are not there.

The Problem Worth Solving

The radiologist examining a chest CT scan is being asked to find, in a stack of several hundred axial slices, an object that may be a few millimeters across, that may be partially obscured by surrounding tissue, that may present as a solid mass or as a faint ground-glass opacity barely distinguishable from the lung parenchyma around it, and that may or may not be malignant. This is, to put the matter plainly, an unreasonable thing to ask of any human visual system working under clinical time pressure. The consequences of a missed nodule are grave. A false positive is not trivial either: it sends a patient down a path of unnecessary biopsy and procedural anxiety.

Automated detection does not replace the radiologist. It handles the exhausting and repetitive labor of surveying every slice, surfaces the candidates that warrant attention, and leaves the judgment to the clinician who is equipped to exercise it. It is also the precondition for telemedicine to function in underserved regions where the radiologist is not in the same building, or the same continent, as the scanner.

The System

No single architecture handles all of the problem's demands with equal facility, which is why the system deploys three.

V-Net

V-Net is a three-dimensional convolutional network designed for volumetric medical image segmentation, which is to say it understands that a CT scan is not a collection of independent images but a continuous representation of a volume of tissue. Where a two-dimensional model sees slices, V-Net sees the nodule as the three-dimensional object it actually is, segmenting it with residual connections that allow the network to learn the complex and irregular shapes that confound simpler approaches. The inputs are CT scan images paired with their segmentation masks; the output is a precise delineation of where the nodule ends and the lung begins.

YOLOv5s

V-Net segments. YOLOv5s locates. Processing each CT slice as an independent image, the model divides the frame into an N×N grid and assigns to each cell a probability that it contains a nodule, along with the bounding box coordinates that describe it. The single-pass architecture makes inference fast enough for clinical use, and the integration with V-Net's segmentation output means the two models are mutually correcting rather than operating in ignorance of each other.
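The grid assignment can be illustrated with a small sketch. It assumes box centers normalized to the range [0, 1] and a single grid of size `n`. The function name `assign_to_cell` is hypothetical and is not part of the YOLOv5 API. YOLOv5 itself predicts at several grid scales with anchor boxes, which this simplification leaves out.

```python
def assign_to_cell(cx, cy, n):
    """Map a normalized box center to its grid cell.

    Returns (row, col, dx, dy), where (row, col) is the cell that owns
    the box and (dx, dy) is the center's offset inside that cell,
    expressed in cell units.
    """
    if not (0.0 <= cx <= 1.0 and 0.0 <= cy <= 1.0):
        raise ValueError("center must be normalized to [0, 1]")
    if n < 1:
        raise ValueError("grid size must be at least 1")
    # Clamp so that a center exactly on the right or bottom edge
    # falls into the last cell instead of one past it.
    col = min(int(cx * n), n - 1)
    row = min(int(cy * n), n - 1)
    dx = cx * n - col
    dy = cy * n - row
    return row, col, dx, dy


# Illustrative values only: a nodule centered right of the middle of
# the slice, on an 8x8 grid.
row, col, dx, dy = assign_to_cell(0.6875, 0.25, 8)
```

Each cell is then responsible only for the candidates whose centers fall inside it, which is what lets the whole slice be scored in one forward pass.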

YOLOv5s with Shape Modeling

Localization alone is insufficient when the pathology of interest is defined as much by its morphology as by its presence. The shape modeling extension computes local geometric descriptors for each detected candidate, among them compactness, eccentricity, and solidity, which together allow the system to distinguish a solid nodule from a ground-glass opacity and to discard the false positives that a purely intensity-based detector would confidently endorse.
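The three descriptors named above can be sketched on a candidate's contour. This is a minimal, dependency-free illustration rather than the project's implementation, and the definitions are assumptions, since the write-up does not give them. Compactness is taken as 4πA/P². Solidity is the contour area divided by the area of its convex hull. Eccentricity is that of the ellipse sharing the second moments of the contour vertices, which only approximates a region-based measurement. All function names are hypothetical.

```python
import math


def polygon_area(pts):
    """Area of a simple polygon by the shoelace formula."""
    n = len(pts)
    s = 0.0
    for i in range(n):
        x1, y1 = pts[i]
        x2, y2 = pts[(i + 1) % n]
        s += x1 * y2 - x2 * y1
    return abs(s) / 2.0


def polygon_perimeter(pts):
    """Sum of edge lengths around the closed contour."""
    n = len(pts)
    return sum(
        math.hypot(pts[(i + 1) % n][0] - pts[i][0],
                   pts[(i + 1) % n][1] - pts[i][1])
        for i in range(n)
    )


def convex_hull(pts):
    """Convex hull by Andrew's monotone chain; pts must be tuples."""
    pts = sorted(set(pts))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def compactness(pts):
    """4*pi*A / P^2: 1.0 for a circle, smaller for ragged outlines."""
    p = polygon_perimeter(pts)
    return 4.0 * math.pi * polygon_area(pts) / (p * p)


def solidity(pts):
    """Contour area over convex hull area: 1.0 for convex shapes."""
    return polygon_area(pts) / polygon_area(convex_hull(pts))


def eccentricity(pts):
    """Eccentricity from the second moments of the contour vertices."""
    n = len(pts)
    mx = sum(x for x, _ in pts) / n
    my = sum(y for _, y in pts) / n
    sxx = sum((x - mx) ** 2 for x, _ in pts) / n
    syy = sum((y - my) ** 2 for _, y in pts) / n
    sxy = sum((x - mx) * (y - my) for x, y in pts) / n
    # Eigenvalues of the 2x2 covariance matrix.
    mean = (sxx + syy) / 2.0
    dev = math.sqrt(((sxx - syy) / 2.0) ** 2 + sxy ** 2)
    major, minor = mean + dev, mean - dev
    if major <= 0.0:
        return 0.0
    return math.sqrt(max(0.0, 1.0 - minor / major))


# Illustrative contour: an L-shaped outline, which is not convex.
l_shape = [(0, 0), (2, 0), (2, 1), (1, 1), (1, 2), (0, 2)]
features = (compactness(l_shape), solidity(l_shape), eccentricity(l_shape))
```

A round, solid candidate scores near 1.0 on compactness and solidity and near 0.0 on eccentricity; elongated or ragged candidates drift away from those values, which is the signal a downstream filter could threshold on.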

DETR

The Detection Transformer brings a fundamentally different inductive bias to the problem. Where YOLO divides the image into a grid, DETR uses a fixed set of learned object queries that attend to the image globally through a transformer decoder. Small feed-forward heads then turn each query into a class label and a bounding box, so that every prediction draws on the whole scene rather than on a local patch. The effect is a reduction in the false positives that slip through the other models, particularly for nodules situated near the pleural wall or the mediastinum, where local context is insufficient.
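What it means for a query to attend globally can be shown with a bare-bones version of scaled dot-product cross-attention. This is a sketch under simplifying assumptions: a single head, no learned projection matrices, and plain Python lists standing in for tensors. The function names are hypothetical and do not correspond to any DETR implementation.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Each query produces a weighted average of all value vectors.

    queries: list of d-dimensional vectors (the object queries)
    keys:    list of d-dimensional vectors, one per image location
    values:  list of vectors, one per image location
    """
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every image location at once.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d)
            for k in keys
        ]
        weights = softmax(scores)
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Illustrative values only: one query, two image locations.
attended = cross_attention([[0.0, 0.0]],
                           [[1.0, 0.0], [0.0, 1.0]],
                           [[1.0, 0.0], [3.0, 4.0]])
```

Because the weights are computed over every location in the slice, a query near the pleural wall can draw on context from anywhere else in the image, which a fixed local receptive field cannot do.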

Implementation

The dataset is the Lung Image Database Consortium (LIDC-IDRI) collection, which provides CT scans with nodule annotations from multiple radiologists. Images were resized to 256×256 pixels, a resolution that preserves the diagnostic detail while remaining tractable for training. Nodule presence was encoded as a binary label per slice, and the data was divided into training, validation, and test splits. Augmentation followed the split: rotations, flips, shear transformations, and zoom, all meant to discourage the models from memorizing the particulars of the training distribution.
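The order of operations, split first and augment afterwards, can be sketched as follows. The project used Scikit-learn for splitting; this illustration swaps in a seeded standard-library shuffle so that it runs without dependencies. The split fractions, the seed, and the function names are assumptions, as is the choice to augment only the training portion. Splitting on scan identifiers rather than individual slices is likewise an assumption, made here so that slices from one scan cannot land on both sides of a split.

```python
import random


def split_dataset(items, val_frac=0.15, test_frac=0.15, seed=0):
    """Shuffle deterministically, then cut into train/val/test lists."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_test = round(len(items) * test_frac)
    n_val = round(len(items) * val_frac)
    test = items[:n_test]
    val = items[n_test:n_test + n_val]
    train = items[n_test + n_val:]
    return train, val, test


def hflip(image):
    """Horizontal flip of an image stored as a list of rows."""
    return [row[::-1] for row in image]


def augment_training(images):
    """Return the originals plus one flipped copy of each."""
    return list(images) + [hflip(img) for img in images]


# Hypothetical scan identifiers; real LIDC-IDRI IDs are not used here.
scan_ids = [f"scan-{i:03d}" for i in range(100)]
train_ids, val_ids, test_ids = split_dataset(scan_ids)
```

Augmenting after the split keeps transformed copies of a validation or test image out of the training set, so the held-out scores measure generalization rather than recall of near-duplicates.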

V-Net was built as an encoder-decoder network with batch normalization and ReLU activations throughout. YOLOv5s was trained for single-pass bounding box prediction. DETR was trained with its standard transformer encoder-decoder configuration.

Results

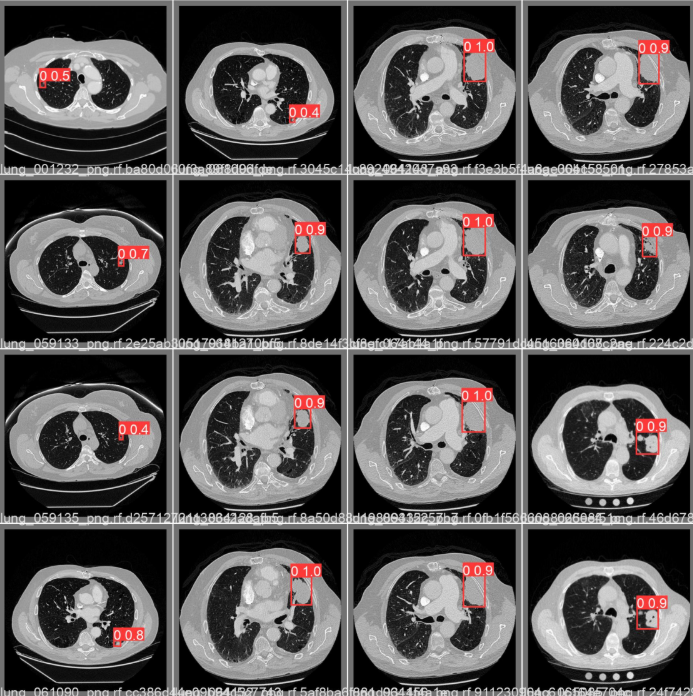

Sidenote: Sample detections by YOLOv5s

V-Net achieved strong segmentation performance as measured by Dice coefficient and Intersection over Union, the standard metrics for evaluating whether the predicted mask and the ground-truth mask are actually describing the same region of tissue. YOLOv5s demonstrated accurate multi-nodule detection per slice at inference speeds compatible with a clinical workflow. DETR's contribution was primarily in suppressing the false positives that the faster models, optimized for recall, inevitably produce.
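For reference, the two segmentation metrics reduce to a few lines. For a predicted mask A and a ground-truth mask B, Dice is 2|A∩B| / (|A| + |B|) and Intersection over Union is |A∩B| / |A∪B|. The sketch below takes flattened binary masks as plain sequences. The function name and the convention of scoring two empty masks as a perfect match are assumptions, not details taken from the project.

```python
def dice_and_iou(pred, truth):
    """Return (dice, iou) for two flattened binary masks."""
    pred = [bool(p) for p in pred]
    truth = [bool(t) for t in truth]
    if len(pred) != len(truth):
        raise ValueError("masks must have the same number of elements")
    intersection = sum(p and t for p, t in zip(pred, truth))
    pred_size = sum(pred)
    truth_size = sum(truth)
    union = pred_size + truth_size - intersection
    if union == 0:
        # Both masks are empty. Treated here as perfect agreement,
        # which is a convention and not the only reasonable choice.
        return 1.0, 1.0
    dice = 2.0 * intersection / (pred_size + truth_size)
    iou = intersection / union
    return dice, iou


# Illustrative masks: two foreground voxels each, one in common.
dice, iou = dice_and_iou([1, 1, 0, 0], [1, 0, 1, 0])
```

The two scores always rank a pair of masks the same way, since Dice equals 2·IoU / (1 + IoU), but Dice is the more forgiving number on small structures such as nodules.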

What Comes Next

The immediate horizon holds three things worth pursuing. Clinical integration would embed the pipeline into a PACS workflow so that detections appear alongside the radiologist's normal reading environment rather than in a separate research tool. Dataset expansion across scanners and institutions would address the generalization problem that haunts every model trained on a single-source collection. And the continued maturation of vision transformers offers a plausible path to feature representations that handle the more pathological nodule presentations that the current models still find difficult.

Tools and Data

The system is implemented in Python with TensorFlow and Keras, using Scikit-learn for data splitting and preprocessing, NumPy for numerical operations, and the Ultralytics package for YOLOv5s. The dataset is the publicly available LIDC-IDRI collection.
